I went back to where it all started. Before Midjourney v7 and DALL-E 3, there was the ‘weird’ grid of DALL-E Mini. But in 2026, the real power lies in your own hardware. Here’s why I still run Stable Diffusion WebUI every single day, and why you should too.

| Feature | DALL-E Mini (Craiyon) | Stable Diffusion (WebUI) | Verdict |
|---|---|---|---|
| Control | Minimal (prompts only) | Extreme (LoRAs, ControlNet, seeds) | Stable Diffusion |
| Speed | Slow (server queues) | Seconds (GPU-dependent) | Stable Diffusion |
| Privacy | None (public generation) | Full (offline/local) | Stable Diffusion |
| Nostalgia | Indisputable OG | Modern workhorse | DALL-E Mini |
| Requirements | Just a browser | Dedicated GPU (8GB+ VRAM recommended) | DALL-E Mini (ease) |

The Nostalgia Trip: DALL-E Mini and the 9-Grid Soul

Remember the summer of 2022? Your Twitter feed was likely exploding with 3×3 grids of slightly terrifying, blurred interpretations of “Darth Vader at a backyard BBQ.” That was DALL-E Mini (now Craiyon), the project that democratized AI art. It wasn’t pretty, and it often looked like a fever dream, but it was *ours.*

In 2026, we look back at Craiyon with a mix of fondness and pity. It used JAX and Flax to try to recreate OpenAI’s magic on a budget. But here’s the kicker: it’s still running. If you want a zero-friction way to generate a quick meme without burning through your Midjourney credits, the legacy lives on. But for anything “pro,” the conversation shifted long ago.

God Mode Enabled: Why Stable Diffusion Still Rules

While DALL-E 3 is smart and Midjourney is beautiful, Stable Diffusion (specifically the AUTOMATIC1111 WebUI) is powerful. It’s the difference between a high-end Toyota and a custom-built drag racer. With SD, you aren’t just asking for an image; you are *directing* one.

Want that character to hold a specific coffee mug? ControlNet has you covered. Want them to look like a specific person or match a specific style? Train a LoRA. Of the tools compared here, Stable Diffusion is the one that gives you fine-grained control over the final output, with no filter or censor breathing down your neck.
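
Seeds are the easiest of those controls to show in code. The sketch below assumes the AUTOMATIC1111 WebUI is running locally and was launched with its `--api` flag, which exposes a `/sdapi/v1/txt2img` endpoint. The helper names (`build_txt2img_payload`, `txt2img`) and the default settings are my own illustrative choices, not part of the WebUI itself.

```python
import base64
import json
import urllib.request

# Default address the WebUI prints as "Running on local URL"; change it if you
# launched with a different --port. (Assumption: a stock local install.)
WEBUI_URL = "http://127.0.0.1:7860"


def build_txt2img_payload(prompt: str, seed: int, steps: int = 20,
                          width: int = 512, height: int = 512,
                          cfg_scale: float = 7.0,
                          negative_prompt: str = "") -> dict:
    """Build a txt2img request body. Pinning the seed (instead of -1, which
    means random) lets the same prompt and settings reproduce an image."""
    return {
        "prompt": prompt,
        "negative_prompt": negative_prompt,
        "seed": seed,
        "steps": steps,
        "width": width,
        "height": height,
        "cfg_scale": cfg_scale,
    }


def txt2img(payload: dict, base_url: str = WEBUI_URL,
            timeout: float = 300.0) -> list:
    """POST the payload to the local WebUI API and return images as bytes.
    Assumes the WebUI was started with the --api flag."""
    request = urllib.request.Request(
        f"{base_url}/sdapi/v1/txt2img",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # The API returns each generated image as a base64-encoded string.
    return [base64.b64decode(image) for image in body["images"]]
```

Calling `txt2img(build_txt2img_payload("cyberpunk Mumbai at dusk, neon billboards", seed=1234))` twice with identical settings should give you matching images on the same setup; change only the seed and only the randomness changes. A LoRA can be pulled into the prompt with the WebUI’s `<lora:name:weight>` syntax, where `name` is a file you actually have in your LoRA folder.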

My Hands-on Test: The 60-Second Prompt Challenge

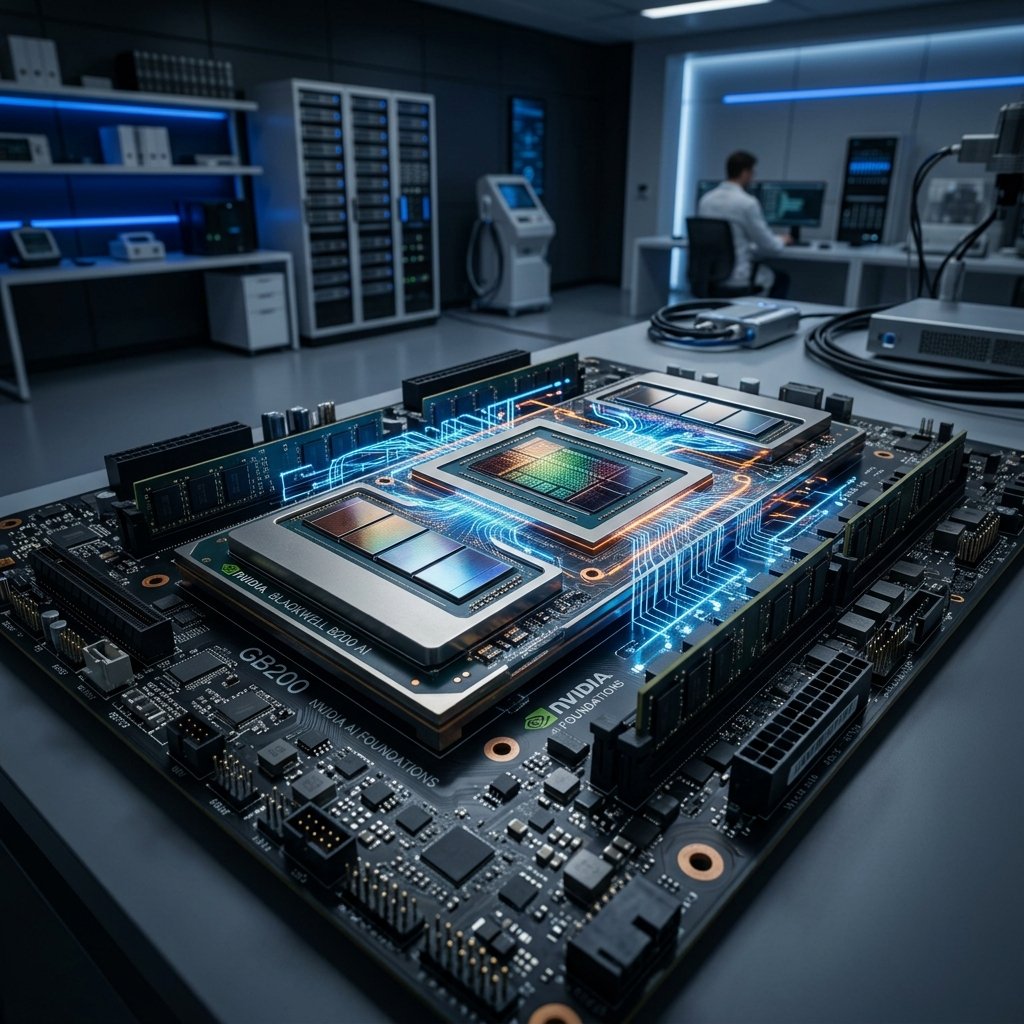

I tried generating a “Cyberpunk Mumbai at Dusk” on both tools this morning. DALL-E Mini gave me exactly what I expected: a blurry, impressionist painting that looked like it was from 2010. It was charming, sure. But Stable Diffusion? On my local RTX 5090 rig (yes, we’ve upgraded!), it spit out a photorealistic, 4K landscape with identifiable rickshaws and neon billboards in under 4 seconds.

Installation: The Local Hero Barrier

Wait, there’s a catch. DALL-E Mini is “browser-and-go.” Stable Diffusion requires a ritual. You need Python 3.10.x, Git, and a chunk of VRAM that would make a console gamer sweat. But once you survive that first `webui-user.bat` run (or `webui.sh` on Linux and macOS) and see the “Running on local URL” message, you feel like a god. You aren’t renting AI anymore; you own it.
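
Before that first launch, a tiny pre-flight check can spare you a confusing error. This is a hypothetical helper of my own, not something that ships with the WebUI: it uses only the standard library to confirm the two software prerequisites named above (Python 3.10.x and Git on your PATH). It does not check VRAM.

```python
import shutil
import sys


def python_ok(version_info=None) -> bool:
    """True if the interpreter is Python 3.10.x, the version this guide targets."""
    if version_info is None:
        version_info = sys.version_info
    return tuple(version_info[:2]) == (3, 10)


def git_available() -> bool:
    """True if a `git` executable can be found on PATH."""
    return shutil.which("git") is not None


def preflight() -> list:
    """Collect human-readable problems; an empty list means you're good to go."""
    problems = []
    if not python_ok():
        found = ".".join(str(part) for part in sys.version_info[:3])
        problems.append(f"Expected Python 3.10.x, found {found}")
    if not git_available():
        problems.append("git was not found on PATH")
    return problems


if __name__ == "__main__":
    issues = preflight()
    if issues:
        for issue in issues:
            print("-", issue)
    else:
        print("Python and Git look fine; VRAM is up to you.")
```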

My Personal Verdict

The final verdict is simple. If you want a quick laugh or a hit of 2022 nostalgia, head over to Craiyon. It’s a wonderful piece of AI history. But if you are serious about AI as a tool for design, business, or world-building, you need Stable Diffusion WebUI. Once it’s installed, it’s the local king that doesn’t need an internet connection to create an empire. Get that GPU ready.

Can Stable Diffusion run on a laptop?

Yes, if it has a dedicated NVIDIA GPU with at least 4GB of VRAM. For a smooth experience in 2026, 8GB to 12GB is the sweet spot. Mac users can run it too, but the WebUI setup is slightly different.
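
If you’re not sure what your laptop has, here’s a rough sketch that maps VRAM onto the thresholds from this answer. The tier names are my own labels, and the detection half assumes PyTorch with CUDA is installed (NVIDIA only, so it won’t describe a Mac); without it, detection simply returns `None`.

```python
def vram_tier(vram_gb: float) -> str:
    """Map GPU memory onto the rough tiers above: under 4GB won't cut it,
    4GB runs, 8-12GB is the sweet spot. Rounding absorbs the gap between a
    card's marketed GB and the slightly smaller GiB figure drivers report."""
    gb = round(vram_gb)
    if gb < 4:
        return "below minimum"
    if gb < 8:
        return "workable"
    if gb <= 12:
        return "sweet spot"
    return "above sweet spot"


def detect_vram_gb():
    """Total memory of the first CUDA GPU in GiB, or None if PyTorch isn't
    installed or no CUDA device is visible."""
    try:
        import torch  # optional dependency; only needed for detection
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    return torch.cuda.get_device_properties(0).total_memory / 1024 ** 3


if __name__ == "__main__":
    vram = detect_vram_gb()
    if vram is None:
        print("No CUDA GPU detected (or PyTorch isn't installed).")
    else:
        print(f"{vram:.1f} GB VRAM -> {vram_tier(vram)}")
```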

Is DALL-E Mini still worth using?

Only for low-stakes fun. It lacks the high-resolution output and face-fixing features that modern tools have. It’s the fountain pen of AI: cool, but not for your daily office work.

Do I need to pay for Stable Diffusion?

Nope. The WebUI is open-source, and the Stable Diffusion model weights are free to download. Your only costs are your electricity bill and the hardware in your room.